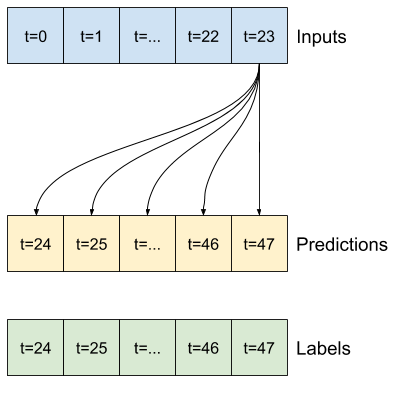

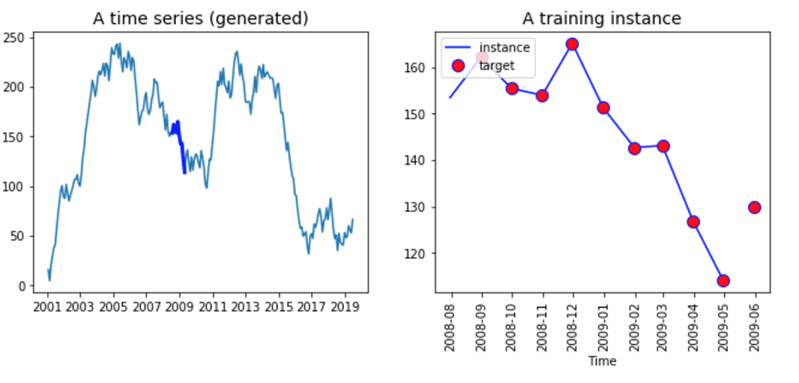

This book mostly focuses on supervised learning algorithms that receive input data and predict an outcome, which we can compare to the ground truth to evaluate their performance. Such algorithms are also called discriminative models because they learn to differentiate between different output values. Generative adversarial networks (GANs), by contrast, are an instance of generative models like the variational autoencoder we encountered in the last chapter.

The following code loads the FordA training and test sets from tab-separated files:

```python
import numpy as np


def readucr(filename):
    # The first column holds the class label; the remaining columns hold the series.
    data = np.loadtxt(filename, delimiter="\t")
    y = data[:, 0]
    x = data[:, 1:]
    return x, y.astype(int)


root_url = ""  # set this to the location of the FordA .tsv files

x_train, y_train = readucr(root_url + "FordA_TRAIN.tsv")
x_test, y_test = readucr(root_url + "FordA_TEST.tsv")

# Add a trailing feature axis: (samples, time steps) -> (samples, time steps, 1).
x_train = x_train.reshape((x_train.shape[0], x_train.shape[1], 1))
x_test = x_test.reshape((x_test.shape[0], x_test.shape[1], 1))

# Shuffle the training set.
idx = np.random.permutation(len(x_train))
x_train = x_train[idx]
y_train = y_train[idx]

# Map the label -1 to 0 so that the classes are 0 and 1.
y_train[y_train == -1] = 0
y_test[y_test == -1] = 0
```

Our model processes a tensor of shape (batch size, sequence length, features), where sequence length is the number of time steps and features is the number of input series observed at each step (here, 500 steps and 1 feature). The encoder block below can replace the RNN layers of a classification model:

```python
from tensorflow.keras import layers


def transformer_encoder(inputs, head_size, num_heads, ff_dim, dropout=0):
    # Normalization and Attention
    x = layers.LayerNormalization(epsilon=1e-6)(inputs)
    x = layers.MultiHeadAttention(
        key_dim=head_size, num_heads=num_heads, dropout=dropout
    )(x, x)
    x = layers.Dropout(dropout)(x)
    res = x + inputs  # first residual connection

    # Feed Forward Part
    x = layers.LayerNormalization(epsilon=1e-6)(res)
    x = layers.Conv1D(filters=ff_dim, kernel_size=1, activation="relu")(x)
    x = layers.Dropout(dropout)(x)
    # Project back to the input feature dimension so the residual sum is valid.
    x = layers.Conv1D(filters=inputs.shape[-1], kernel_size=1)(x)
    return x + res
```

The main part of our model is now complete. We can stack multiple `transformer_encoder` blocks and then add the final Multi-Layer Perceptron classification head. Before the dense layers, we need to reduce the output tensor of the encoder part of our model down to a vector of features for each data point in the current batch. A common way to achieve this is to use a pooling layer. For this example, a `GlobalAveragePooling1D` layer is sufficient.

An excerpt of the model summary:

| Layer (type) | Output Shape | Param # | Connected to |
| --- | --- | --- | --- |
| `layer_normalization (LayerNorma` | (None, 500, 1) | 2 | `input_1` |
| `multi_head_attention (MultiHead` | (None, 500, 1) | 7169 | `layer_normalization` |
| `dropout (Dropout)` | (None, 500, 1) | 0 | `multi_head_attention` |
| `tf.__operators__.add (TFOpLambd` | (None, 500, 1) | 0 | `dropout` |
| `layer_normalization_2 (LayerNor` | (None, 500, 1) | 2 | `tf.__operators__.add_1` |
| `multi_head_attention_1 (MultiHe` | (None, 500, 1) | 7169 | `layer_normalization_2` |
| `dropout_2 (Dropout)` | (None, 500, 1) | 0 | `multi_head_attention_1` |
| `layer_normalization_4 (LayerNor` | (None, 500, 1) | 2 | `tf.__operators__.add_3` |
| `multi_head_attention_2 (MultiHe` | (None, 500, 1) | 7169 | `layer_normalization_4` |
| `dropout_4 (Dropout)` | (None, 500, 1) | 0 | `multi_head_attention_2` |
| `layer_normalization_6 (LayerNor` | (None, 500, 1) | 2 | `tf.__operators__.add_5` |
| `multi_head_attention_3 (MultiHe` | (None, 500, 1) | 7169 | `layer_normalization_6` |
| `dropout_6 (Dropout)` | (None, 500, 1) | 0 | `multi_head_attention_3` |
| `global_average_pooling1d (Globa` | (None, 500) | 0 | `tf.__operators__.add_7` |
| `dense (Dense)` | (None, 128) | 64128 | `global_average_pooling1d` |
| `dropout_8 (Dropout)` | (None, 128) | 0 | `dense` |
| `dense_1 (Dense)` | (None, 2) | 258 | `dropout_8` |

Training output:

```
45/45 - 26s 499ms/step - loss: 1.0233 - sparse_categorical_accuracy: 0.5174 - val_loss: 0.7853 - val_sparse_categorical_accuracy: 0.5368
45/45 - 22s 499ms/step - loss: 0.9108 - sparse_categorical_accuracy: 0.5507 - val_loss: 0.7169 - val_sparse_categorical_accuracy: 0.
```
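To make the pooling step concrete, here is a minimal NumPy sketch of what global average pooling does to a tensor of shape (batch size, sequence length, features). The array `encoded` and the helper `global_average_pool` are hypothetical stand-ins for the encoder output and the Keras layer, not part of the original example. Which axis the original model pools over is an inference: the `(None, 500)` output shape reported in the model summary is consistent with averaging over the last axis, which would correspond to `data_format="channels_first"` in Keras.

```python
import numpy as np

# Hypothetical encoder output: 4 series, 500 time steps, 1 feature each.
rng = np.random.default_rng(0)
encoded = rng.normal(size=(4, 500, 1))


def global_average_pool(x, axis):
    """Average a (batch, steps, features) array over one non-batch axis."""
    return x.mean(axis=axis)


# Averaging over the time axis leaves one value per feature.
pooled_over_time = global_average_pool(encoded, axis=1)

# Averaging over the last axis leaves one value per time step.
pooled_over_features = global_average_pool(encoded, axis=2)

print(pooled_over_time.shape)
print(pooled_over_features.shape)
```

Either way, each data point in the batch ends up as a flat vector that a stack of dense layers can consume. With a single input feature, pooling over the last axis simply drops that axis and passes all 500 time steps to the classification head.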
